What I learned about AI in healthcare at Huawei Singapore TechWeek

From 3D hologram-guided surgery to AI models that fail across populations, the sessions were eye-opening.

A surgeon in Singapore overlaid a 3D hologram of a CT scan directly onto a patient, then successfully removed a tumour using it. I saw the footage today at Huawei Singapore TechWeek, where I sat in on sessions covering intelligent healthcare, AI localisation, and extended reality in surgery.

Here's what stood out.

The state of intelligent healthcare

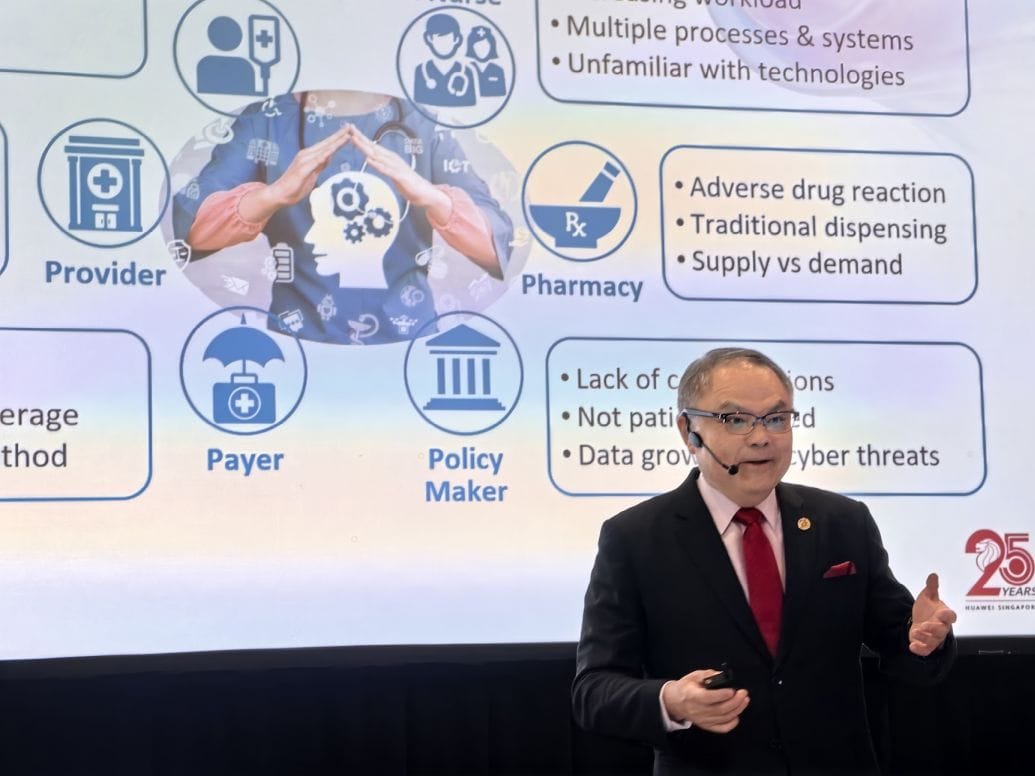

Hong-Eng Koh of Huawei set the scene in his opening keynote with a striking data point: a 500-bed hospital generates around 1 PB of data a year, with archival requirements stretching to 10 years or more. That's a massive storage challenge on its own, before AI even enters the picture.
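To put those figures in perspective, here is a rough back-of-envelope sketch of how the retained volume could add up. It assumes a flat 1 PB a year, a retention window of exactly 10 years, and no compression or extra copies. None of those assumptions came from the keynote, and the function name is my own.

```python
def retained_petabytes(year, pb_per_year=1.0, retention_years=10):
    """Estimate the data on hand at the end of `year` (1-indexed), in PB.

    Assumptions (mine, not the speaker's): a flat generation rate, and
    data older than the retention window is deleted.
    """
    return pb_per_year * min(year, retention_years)


for year in (1, 5, 10, 15):
    print(f"Year {year:>2}: {retained_petabytes(year):.0f} PB retained")
```

On these assumptions the archive levels off at about 10 PB from year 10 onwards. Any growth in data volume, longer retention, or backup copies would push the real figure higher.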

What surprised me was where hospitals are actually using AI today. It's largely in operations rather than clinical care, covering areas such as documentation, workflow optimisation, scheduling, and reducing staff burnout.

So while the technology is proving its worth, it is functioning mostly behind the scenes. Put another way, AI still has some distance to cover before it arrives at the bedside in a meaningful way.

Photo Captions: (Left) Koh Hong Eng. (Centre) Prof Gao Yujia.

Why AI models need localisation

One of the more eye-opening insights was how AI models don't transfer across populations without retraining. Hong-Eng gave two anecdotes that made the point vividly.

Mammogram models trained on European women didn't work well for Asian women due to different body proportions. And skull X-ray models developed in Europe failed in Brazil because of differing skull shapes.

The implication is clear: global models are a starting point, not a solution. Secondary training with local data is non-negotiable. And yes, this means hospitals will need GPUs too.

Applied technology in surgery

The session I found most fascinating was by Prof Gao Yujia, assistant group CTO of NUHS, whose clear grasp of technology was amply evident. He walked through various uses his team has developed for the Microsoft HoloLens, complete with photos and videos that brought each application to life.

The headline application involved overlaying 3D holograms converted from CT and MRI scans directly onto the patient on the operating table. A colleague successfully removed a rib cage tumour this way, operating with the holographic guide visible in real time. Watching the footage, I found it hard not to appreciate how far surgical technology has come.

Another striking example involved a living donor liver transplant for a baby. The team used a digital twin to plan the procedure, virtually placing the mother's liver segment inside the baby to check sizing. Extended reality was then used during surgery to shave the liver to precisely match the pre-planned dimensions.

Prof Gao also demonstrated how the HoloLens's built-in infrared camera, paired with a GPU-powered convolutional neural network running elsewhere on the network, can identify veins beneath the skin in real time. Interestingly, the hospital's network initially slowed down too much during office hours to support this. The deployment of a private 5G network, which took some months to plan and install, solved the latency problem.

OpenClaw Server

At the exhibition area, I also spotted the HuaKun OpenClaw server, a liquid-cooled desktop that supports up to 20 simultaneous sessions with two onboard Huawei AI accelerators.